Elon Musk’s Hyperscale Telecom Infrastructure: Tesla Dojo, xAI Clusters Reshape Network & Power Demands

Source: Dgtl Infra analysis of Elon Musk’s data center operations for Tesla, X, and xAI, published August 4, 2024. The aggressive infrastructure build-out by Musk’s portfolio companies, particularly for AI training, is creating new hyperscale demand patterns that directly impact telecom carriers, colocation providers, and power grids.

Elon Musk’s ecosystem of companies—spanning Tesla, X (formerly Twitter), and the AI startup xAI—is emerging as a formidable force in hyperscale infrastructure, with profound implications for the telecom and data center sectors. Unlike traditional cloud providers, Musk’s vertically integrated approach combines proprietary supercomputing hardware (Dojo), massive AI training clusters, and social media data pipelines, driving unprecedented demand for high-bandwidth, low-latency connectivity and gigawatt-scale power. This build-out is not just about compute; it’s a strategic reshaping of the underlying network and energy fabric required for next-generation AI, forcing carriers and infrastructure operators to adapt to a new class of customer with unique technical and geographical requirements.

Technical Deep Dive: The Musk Infrastructure Stack – Dojo, Grok, and AI Fabrics

The infrastructure demands stem from three primary, interconnected pillars: Tesla’s Dojo supercomputer, xAI’s Grok training clusters, and X’s real-time data platform. Each presents distinct challenges for network architects and facility operators.

Tesla’s Dojo Supercomputer: Announced at Tesla’s AI Day, Dojo is a custom-built, exascale-class system designed for video processing and neural network training for Full Self-Driving (FSD). Its architecture is based on the D1 chip, a 362 TFLOPS (BF16/CFP8) processor, with 25 chips integrated into a single training tile. Six tiles form a system tray, two trays (12 tiles) fill a cabinet, and ten cabinets (120 tiles, or 3,000 D1 chips) constitute an “ExaPOD,” which Tesla claims will deliver 1.1 exaFLOPS of peak performance. The critical telecom implication lies in its internal fabric: Dojo uses a proprietary, high-bandwidth, low-latency interconnect to move the massive data flows between chips and tiles. Although this fabric is internal to the system, its design philosophy underscores a move away from generic Ethernet/IP networks toward application-specific fabrics, a trend that influences colocation and interconnect strategies for AI workloads.
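The Dojo figures above reduce to simple multiplication. The sketch below takes the per-chip and per-tile numbers from the text and assumes a 120-tile ExaPOD (ten cabinets of twelve tiles, as presented at Tesla's AI Day 2022); the constant and function names are hypothetical, chosen for illustration.

```python
import math

# Figures from the text: peak D1 throughput and chips per training tile.
D1_TFLOPS = 362          # BF16/CFP8 peak per D1 chip, in TFLOPS
CHIPS_PER_TILE = 25      # D1 chips per training tile
# Assumption: 10 cabinets x 12 tiles per ExaPOD (Tesla AI Day 2022 figures).
TILES_PER_EXAPOD = 120

TFLOPS_PER_EXAFLOPS = 1_000_000  # 1 exaFLOPS = 10^6 TFLOPS


def peak_exaflops(tiles: int) -> float:
    """Peak throughput, in exaFLOPS, of a given number of training tiles."""
    return tiles * CHIPS_PER_TILE * D1_TFLOPS / TFLOPS_PER_EXAFLOPS


def tiles_needed(target_exaflops: float) -> int:
    """Smallest whole number of tiles whose combined peak meets the target."""
    tile_tflops = CHIPS_PER_TILE * D1_TFLOPS
    return math.ceil(target_exaflops * TFLOPS_PER_EXAFLOPS / tile_tflops)


if __name__ == "__main__":
    chips = TILES_PER_EXAPOD * CHIPS_PER_TILE
    print(f"ExaPOD: {chips} D1 chips, {peak_exaflops(TILES_PER_EXAPOD):.3f} EF peak")
    print(f"Tiles for a strict 1.1 EF peak: {tiles_needed(1.1)}")
```

Under these assumptions, 120 tiles (3,000 chips) give about 1.086 exaFLOPS, which rounds to Tesla's 1.1 figure; meeting 1.1 exaFLOPS strictly at peak would take 122 tiles. These are peak numbers; sustained training throughput is lower.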

xAI’s Grok Training Clusters: Musk’s AI venture, xAI, is building one of the world’s largest GPU clusters to train its Grok large language model. Reports indicate the company is assembling a cluster of 100,000 Nvidia H100 GPUs. A cluster of this magnitude requires an immense, non-blocking network fabric, typically a fat-tree or Clos topology built on InfiniBand or on AI-optimized Ethernet platforms such as Nvidia’s Spectrum-X, to prevent communication bottlenecks between nodes. The network spine for such a cluster can demand aggregate bisection bandwidth exceeding 1 petabit per second. For telecom carriers, this means providing dense, low-latency cross-connects within data center campuses and high-capacity dark fiber links between facilities. The power draw is equally staggering: at the H100’s 700 W maximum TDP, the GPUs alone draw about 70 MW, so a 100,000-GPU cluster is estimated to require over 70 MW of IT load, and total facility power demand rises well beyond 100 MW once cooling and other overhead are included.
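To see how the fabric and power figures scale, the sketch below sizes a 100,000-GPU cluster. The 700 W figure is the H100 SXM's maximum TDP; the 400 Gb/s of fabric bandwidth per GPU, the 1.5x server overhead factor, and the PUE of 1.3 are assumptions chosen for illustration, not reported xAI figures. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ClusterSpec:
    gpus: int = 100_000
    gpu_watts: float = 700.0      # H100 SXM maximum TDP
    nic_gbps: float = 400.0       # assumption: fabric bandwidth per GPU
    server_overhead: float = 1.5  # hypothetical: CPUs, memory, NICs, fans, storage
    pue: float = 1.3              # hypothetical power usage effectiveness


def injection_pbps(spec: ClusterSpec) -> float:
    """Total bandwidth all GPUs can push into the fabric at once, in Pb/s."""
    return spec.gpus * spec.nic_gbps / 1e6  # Gb/s -> Pb/s


def bisection_pbps(spec: ClusterSpec) -> float:
    """Per-direction bisection bandwidth of a non-blocking fabric:
    half the GPUs sending to the other half, each at full NIC rate."""
    return injection_pbps(spec) / 2


def gpu_power_mw(spec: ClusterSpec) -> float:
    """Power drawn by the GPUs alone at full TDP, in MW."""
    return spec.gpus * spec.gpu_watts / 1e6  # W -> MW


def it_load_mw(spec: ClusterSpec) -> float:
    """GPU power scaled up for the rest of each server."""
    return gpu_power_mw(spec) * spec.server_overhead


def facility_mw(spec: ClusterSpec) -> float:
    """IT load scaled by PUE to include cooling and power distribution losses."""
    return it_load_mw(spec) * spec.pue


if __name__ == "__main__":
    spec = ClusterSpec()
    print(f"Bisection bandwidth: {bisection_pbps(spec):.1f} Pb/s")
    print(f"GPU power: {gpu_power_mw(spec):.1f} MW")
    print(f"IT load: {it_load_mw(spec):.1f} MW")
    print(f"Facility load: {facility_mw(spec):.1f} MW")
```

With these inputs the per-direction bisection bandwidth comes to about 20 Pb/s, well above the 1 Pb/s floor quoted above, and the facility load lands near 136 MW. Each output scales linearly with its inputs, so the assumed values can be swapped for vendor- or site-specific ones.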

X’s (formerly Twitter) Real-Time Data Pipeline: The social media platform serves a dual purpose: it is a major infrastructure consumer in its own right, serving a global user base, and it is a unique real-time data source for training xAI’s models. This creates closed-loop demand for high-throughput network links between X’s points of presence and the AI training facilities. Ingesting real-time posts, images, and videos for model training requires sustained, high-volume data transfer, placing a premium on peering relationships and content delivery network (CDN) integration.

Industry Impact: Colocation, Power, and Network Operator Strategies

The scale and speed of Musk’s infrastructure deployments are forcing a recalibration across the infrastructure value chain. This is not incremental growth; it is a step-function increase in requirements.

Colocation and Hyperscale Campus Development: Traditional multi-tenant data center (MTDC) providers must adapt to the “gigawatt deal.” xAI’s reported search for 100+ MW blocks of capacity, potentially across multiple geographic regions, mirrors the procurement patterns of the largest hyperscalers (AWS, Google, Microsoft). However, Musk’s companies may exhibit less geographic diversification and a higher tolerance for locating in specific, power-rich markets like Texas or Nevada, where Tesla and SpaceX already have significant operations. This creates both opportunity and risk for operators in those regions, who must secure corresponding grid upgrades. The demand is also accelerating the development of “AI-ready” campuses with pre-provisioned high-density power (50 kW+/rack) and advanced liquid cooling capabilities.
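A quick way to see what "50 kW+/rack" means in practice: the sketch below uses the DGX H100's published maximum system power of about 10.2 kW and compares how many racks a 100 MW block of IT load occupies at 50 kW per rack versus a hypothetical legacy density of 10 kW per rack. The helper names are illustrative.

```python
import math

DGX_H100_KW = 10.2  # maximum system power of one DGX H100 (8 GPUs)


def servers_per_rack(rack_kw: float, server_kw: float = DGX_H100_KW) -> int:
    """Whole servers that fit within a rack's power budget."""
    return math.floor(rack_kw / server_kw)


def racks_for_block(block_mw: float, rack_kw: float) -> int:
    """Racks needed to place a block of IT load at a given per-rack density."""
    return math.ceil(block_mw * 1000 / rack_kw)


if __name__ == "__main__":
    # 10 kW is a hypothetical legacy density used only for comparison.
    for rack_kw in (10, 50):
        print(f"{rack_kw} kW/rack: {servers_per_rack(rack_kw)} DGX H100 per rack, "
              f"{racks_for_block(100, rack_kw)} racks for 100 MW")
```

At 50 kW a rack holds four such systems and a 100 MW block fits in about 2,000 racks; at 10 kW a single system exceeds the rack's budget, which is why AI-ready halls are designed around much higher densities and liquid cooling.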

Power Grid as the Ultimate Bottleneck: The single greatest constraint for these projects is electrical power. A single 100 MW AI data center cluster running around the clock consumes roughly as much electricity in a year as 80,000 average U.S. homes. Musk’s ventures are actively engaging with utilities and exploring on-site generation and storage, including potential integration with Tesla’s Megapack batteries and solar products. For telecom operators, this underscores the criticality of energy resilience for their own networks and highlights a potential new partnership avenue: co-locating network hubs and points of presence (PoPs) within or adjacent to these power-rich AI campuses to ensure ultra-reliable, high-capacity backhaul.
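The homes comparison follows from converting continuous power into annual energy. The sketch assumes the cluster runs at full load all year (load factor 1.0) and that an average U.S. home uses about 10,800 kWh per year, close to recent EIA averages; both values are assumptions, and the function names are illustrative.

```python
HOURS_PER_YEAR = 8760
# Assumption: average annual electricity use of one U.S. home, in kWh.
HOME_KWH_PER_YEAR = 10_800


def annual_mwh(load_mw: float, load_factor: float = 1.0) -> float:
    """Annual energy, in MWh, for a load running at load_factor of its rating."""
    return load_mw * HOURS_PER_YEAR * load_factor


def homes_equivalent(load_mw: float, load_factor: float = 1.0,
                     home_kwh: float = HOME_KWH_PER_YEAR) -> float:
    """Number of average homes whose yearly use matches the load's energy."""
    return annual_mwh(load_mw, load_factor) * 1000 / home_kwh  # MWh -> kWh


if __name__ == "__main__":
    for lf in (1.0, 0.8):
        print(f"100 MW at load factor {lf}: ~{homes_equivalent(100, lf):,.0f} homes")
```

At full load, 100 MW works out to 876,000 MWh a year, or roughly 81,000 homes; at an 80% load factor the figure drops to about 65,000.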

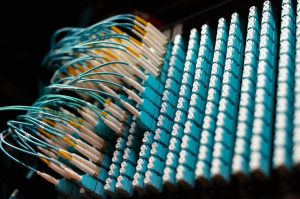

Network Operator Imperatives: The rise of massive AI clusters creates a new tier of wholesale network customer. Carriers like Lumen, Zayo, and regional fiber providers must cater to:

1. Intra-Campus Fabrics: Providing dark fiber or dedicated wavelength services for spine-leaf network architectures within a data center campus.

2. Inter-Facility Trunks: High-count fiber conduits between geographically dispersed training clusters or between data centers and corporate R&D sites (e.g., linking a Texas cluster to Tesla’s Palo Alto engineering headquarters).

3. Low-Latency Peering: Optimizing routes between these AI hubs and the public clouds (AWS, Google Cloud) for hybrid training workflows, as well as to major internet exchanges for data ingestion from platforms like X.

Traffic patterns are also shifting from the traditional north-south (user-to-data center) model to massive east-west (server-to-server) flows, both within a facility and, as training spans multiple sites, between facilities. That shift requires rethinking metro and long-haul network design to handle more distributed, any-to-any communication.

Regional and Global Telecom Implications: Focus on North America and Strategic Markets

The geographic footprint of Musk’s infrastructure build-out is heavily concentrated but has ripple effects globally.

North American Dominance with a Texan Core: The primary build-out is focused in the United States, with Texas emerging as a central hub due to its business-friendly regulation, available land, and growing (though sometimes strained) power grid. Tesla’s Gigafactory Texas and SpaceX’s Starbase in Boca Chica create a natural anchor. Major data center markets like Austin and Dallas, along with emerging zones in West Texas, are likely targets. This concentration will strain local fiber and power infrastructure, demanding significant investment from carriers and fiber infrastructure providers such as AT&T and Crown Castle, as well as from utilities. It also positions Texas as a new top-tier interconnection hub, potentially rivaling Northern Virginia and Silicon Valley for AI-centric traffic.

Global Network for a Global AI: While training may be centralized, inference and application of AI models developed by xAI and Tesla will be global. This drives demand for:

– Submarine Cable Capacity: Low-latency transatlantic and transpacific routes to serve international markets and synchronize data across regions.

– Edge Computing Nodes: For applications like Tesla’s FSD, which may require regional data processing centers for fleet learning aggregation, creating demand for distributed edge facilities.

– African and MENA Considerations: Although not primary training locations, Africa and the Middle East are important as data sources and future growth markets. Musk’s Starlink LEO satellite network provides a unique, vertically integrated backhaul solution for data collection and service delivery in underserved areas, potentially bypassing traditional terrestrial carriers. This represents both a competitive threat and a partnership opportunity for local mobile network operators (MNOs) seeking to offer AI-powered services.

Regulatory and Security Dimensions: The concentration of advanced AI compute capacity under the control of a single, high-profile individual’s portfolio may attract heightened regulatory scrutiny regarding data sovereignty, cybersecurity, and critical infrastructure protection. Telecom operators providing connectivity to these facilities will need to ensure their networks meet stringent security and compliance standards.

Conclusion: The Infrastructure Arms Race Enters a New Phase

Elon Musk’s ventures are catalyzing a new phase in the infrastructure arms race, one defined by the convergence of AI supercomputing, real-time data networks, and gigawatt-scale energy systems. For the telecom industry, this represents a significant source of future wholesale and enterprise revenue but also a formidable technical challenge. Success will require:

– Proactively developing high-density, low-latency interconnection products tailored for AI cluster communication.

– Forging deeper partnerships with power utilities and colocation providers to secure capacity in strategic, power-rich markets.

– Investing in network automation and software-defined networking (SDN) to dynamically provision the massive, any-to-any data flows that characterize AI training.

– Monitoring both the threat and the opportunity posed by Starlink’s vertically integrated global LEO network as a potential alternative backhaul for AI data pipelines.

The era of AI-defined infrastructure is here, and its requirements are being written in real-time by the scale of projects like Dojo and Grok. Network operators that can provide the foundational fabric for this new compute paradigm will secure a pivotal role in the next technological epoch.